Generative AI tools like Midjourney have changed how creatives are produced; however, when it comes to advertising environments, not all AI is created equal. This article compares MGID GenAI to Midjourney, explaining why general-purpose image generators fall short for advertisers.

With generative AI, creating an image has never been faster. A prompt goes in, a polished visual comes out, perfect for inspiration and rapid experimentation.

However, advertising adds constraints that general AI creative tools don’t account for. A visual that looks strong in isolation may not fit native placements, may require resizing or may fail moderation. In performance environments, generation is only the first step; deployment determines results.

MGID GenAI approaches image creation from the reality of advertising, producing visuals designed for placements rather than standalone artistic output.

To understand the difference, it’s important to look beyond image quality and examine how each platform approaches creative generation within the context of real advertising environments.

What is Midjourney and What It’s Designed For

Midjourney is a generative image tool built to turn text prompts into visuals. It doesn’t live inside ad platforms, dashboards or performance metrics. Its core value is creative speed: exploring visual ideas faster than traditional design workflows allow.

For marketers, this makes Midjourney especially useful at the idea stage. Within minutes, teams can explore multiple visual interpretations without briefs, queues or production delays.

Where Midjourney Fits Naturally in Marketing Workflows

Midjourney works best when the task is creative discovery.

Typical use cases include:

- generating visual directions for campaigns;

- exploring styles before committing to production;

- creating moodboards or concept previews;

- producing draft visuals for internal discussions or early tests.

In these moments, precision and compliance matter less than volume and variation. The goal is to discover what’s possible, and that is where Midjourney shines.

What Midjourney Does Not See

Once an image leaves Midjourney and enters a live campaign, it becomes subject to forces the tool has no access to.

It doesn’t know:

- which formats the image will need to fit;

- whether the layout works in small native placements;

- if the visual meets platform compliance standards;

- how headlines and visuals will coexist in a native ad block;

- whether the image is optimized for advertising usability.

From Midjourney’s perspective, every image is complete at the moment of generation. From an advertiser’s perspective, that’s when the real work begins.

The Operational Gap for Advertisers

Using Midjourney in paid advertising introduces a new (and manual) workflow loop:

- Generate images via prompts.

- Export them.

- Resize and adjust for ad formats.

- Check compliance.

- Re-upload revised assets to the advertising platform.

This loop works, but it doesn’t scale. As campaign volume grows, the amount of manual adaptation increases. What starts as fast generation often turns into operational overhead before launch.
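To make the "resize and adjust" step of this loop concrete, here is a minimal sketch of the arithmetic involved when a single generated image has to be cropped for several placements. It is an illustration only and not part of Midjourney or MGID: the function names and the placement ratios in `PLACEMENT_RATIOS` are hypothetical, and real placements will have their own requirements.

```python
from fractions import Fraction


def center_crop_box(width: int, height: int, target_w: int, target_h: int) -> tuple[int, int, int, int]:
    """Return (left, top, right, bottom) for the largest centered crop
    of a width x height image that has the aspect ratio target_w:target_h."""
    target = Fraction(target_w, target_h)
    if Fraction(width, height) > target:
        # Source is wider than the target: keep full height, trim the sides.
        crop_h = height
        crop_w = int(height * target)
    else:
        # Source is taller than (or equal to) the target: keep full width,
        # trim top and bottom.
        crop_w = width
        crop_h = int(width / target)
    left = (width - crop_w) // 2
    top = (height - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h)


# Hypothetical placement ratios, chosen for illustration only.
PLACEMENT_RATIOS = {"landscape": (16, 9), "square": (1, 1), "tall": (3, 4)}


def crop_plan(width: int, height: int) -> dict[str, tuple[int, int, int, int]]:
    """Compute one crop box per hypothetical placement for a single source image."""
    return {
        name: center_crop_box(width, height, w, h)
        for name, (w, h) in PLACEMENT_RATIOS.items()
    }


if __name__ == "__main__":
    # A square 1024 x 1024 source needs a different crop for each placement.
    for name, box in crop_plan(1024, 1024).items():
        print(name, box)
```

The boxes use the (left, top, right, bottom) convention accepted by common imaging libraries. Even in this simplified form, every new placement adds another crop to compute and review by eye, which is where the manual overhead comes from.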

This isn’t a limitation of Midjourney but rather a reflection of its original purpose: exploration.

To understand how advertising-native AI approaches image generation differently, we need to look at MGID GenAI, a system built to create visuals that are ready for real advertising environments.

What is MGID GenAI?

MGID GenAI is a set of AI-powered capabilities built directly into the MGID platform, operating alongside campaign setup, targeting and asset management tools.

While most generative tools begin with a prompt focused on aesthetics or mood, MGID GenAI begins with advertising requirements: placement logic, format constraints and usability within native environments. MGID’s creative generation is shaped not only by ideas but also by practical deployment needs.

From Image Generation to Ad-Ready Assets

MGID GenAI is built around real advertising production needs. Instead of generating standalone visuals that require additional adaptation, it creates assets structured specifically for native environments.

Images are:

- generated in correct formats and aspect ratios;

- composed for thumbnail visibility and feed-based placements;

- structured to support headline integration;

- aligned with platform guidelines before reaching the dashboard.

Beyond visuals, MGID GenAI can also generate supporting elements such as titles and calls to action that match native ad formats. Clear AI labeling and built-in review processes help reduce friction before launch. The result is a creative ready for immediate campaign use.

| Want a deeper look at MGID’s generative tools? Explore our complete guide to MGID GenAI features, where we break down text-to-image, image-to-image generation, AI-powered ad copy and performance prediction in detail. |
|---|

MGID GenAI vs. Midjourney: Purpose and Design Differences

On the surface, Midjourney and MGID GenAI do the same thing: both use AI models to generate images. The difference lies in design philosophy and intended use.

- Midjourney is built for creative exploration. It prioritizes artistic interpretation, visual richness and stylistic experimentation.

- MGID GenAI is built for advertising environments. It prioritizes clarity, placement compatibility and ad-format alignment.

| | Midjourney | MGID GenAI |
|---|---|---|
| Primary focus | Artistic visual generation | Advertising-oriented visual generation |
| Context awareness | None | Native ad placement logic |
| Output type | Standalone image | Ad-ready visual asset |
| Format optimization | Manual | Built-in |

To understand these differences fully, let’s look at how both tools respond to the same creative ideas across different scenarios.

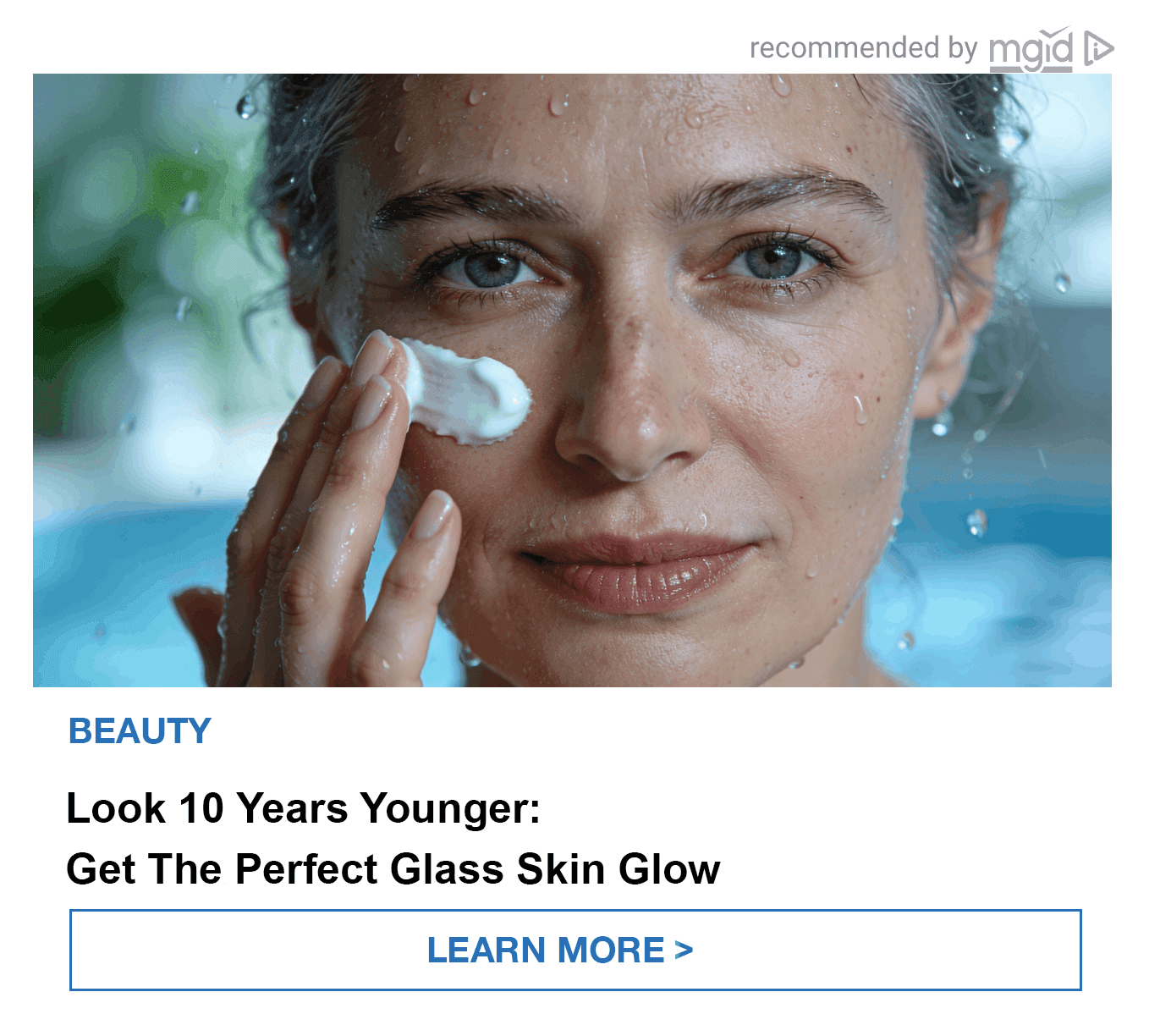

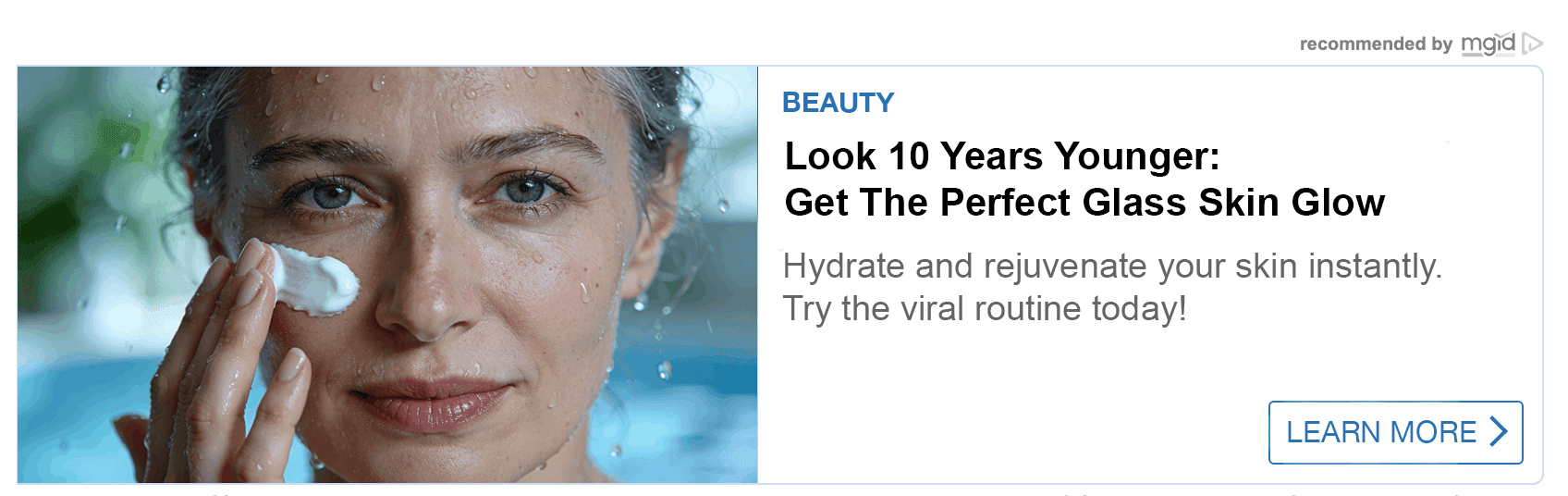

Example 1: Evergreen Lifestyle (Skincare / Anti-Aging)

| Product | Creative goal |
|---|---|
| Moisturizing cream or hyaluronic acid serum | Move away from artificial “studio-perfect” beauty while maintaining a premium aesthetic. The skin should feel alive: hydrated, breathable and real. |

Midjourney Output

Typical result: While technically correct, this image is emotionally flat. The subject is static with skin that appears overly smoothed or matte. There is no visible sensation of hydration, freshness or product action. The image looks like a stock photo rather than a performance-driven ad creative.

MGID GenAI

For this scenario, MGID employs the “Sensory Texture” logic. Instead of simply showing product usage, the visual emphasizes how the skin feels. The wet-skin effect and visible water droplets create associations with purity, freshness and vitality. Hyper-realistic texture, including visible pores, avoids artificial, over-smoothed beauty standards while maintaining a premium aesthetic. The result is a visual that communicates hydration as a physical sensation.

Rather than being just visually attractive, the result creates a tactile sensation that performs better in native placements.

Below, you can see how MGID GenAI creatives appear inside real native ad widgets across mobile and desktop formats.

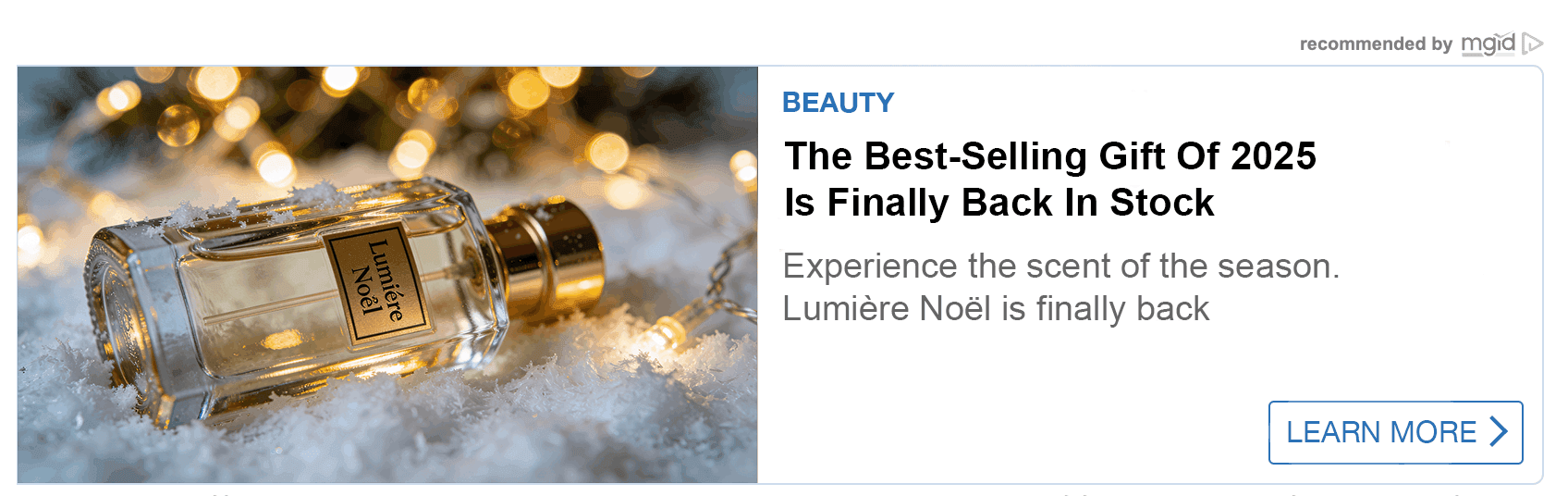

Example 2: Seasonal Creative (Winter Holiday Gifts)

| Product | Creative goal |
|---|---|
| Perfume or premium cosmetics | Present the product as a desirable holiday gift by integrating it into a magical winter atmosphere, rather than just placing it near decorations. |

Midjourney Output

Typical result: What is generated is a static still-life composition. The bottle and decorations exist separately. The image resembles a catalog photo, which is visually correct but emotionally neutral. It lacks depth, focus and premium gift appeal.

MGID GenAI

For this scenario, MGID uses the “Product Hero” logic. The product becomes the emotional and visual center of the composition rather than a background object. Festive golden bokeh and cinematic light reflections are integrated into the glass surface, surrounding the bottle with a sense of magic and exclusivity. This approach transforms a static still-life into a premium, gift-driven narrative designed to trigger desire.

Instead of documentation, the image creates aspiration, which is critical in seasonal performance campaigns.

As with the previous example, below you can see how this creative appears inside real native widgets across mobile and desktop placements.

Example 3: Product-Focused Creative (Health / Supplements)

| Product | Creative goal |
|---|---|
| Joint support capsules or multivitamins | Shift from passive packaging shots to an active, curiosity-driven visual that highlights the product’s perceived innovation. |

Midjourney Output

Typical result: While informative and functional, this image looks like a generic catalog listing and fails to create a curiosity gap. Its passivity fails to trigger an emotional response or imply innovation.

MGID GenAI

For this scenario, MGID uses the “Tactile Focus” logic. The capsule is shown held between fingers to create a first-person connection, as if the viewer is about to take it. The transparent shell filled with visible granules or liquid suggests innovation and potency, while light passing through the pill enhances the perception of advanced formulation. This shifts the creative from passive packaging display to an active, curiosity-driven moment.

The result creates curiosity and perceived innovation, which are essential drivers for click-through rates in health verticals.

Here’s another example of how the creative looks within actual native placements across devices.

How MGID GenAI Approaches Creative Variation

In advertising, creative variety is essential for testing and placement diversity. MGID GenAI supports image variation at scale, allowing advertisers to generate multiple ad-ready visual versions based on a single concept.

Instead of manually rewriting prompts and adjusting compositions, advertisers can produce variations aligned with proven layouts and native advertising standards. This makes it easier to test different visual angles and maintain structural consistency.

When using MGID GenAI, the focus shifts from generating a single “perfect” image to building a controlled set of variations suitable for campaign use.

From Image to Ad: Workflow Differences

The difference between the tools becomes more visible after image generation.

Midjourney workflow

- Image is generated

- Exported manually

- Adapted to required ad formats

- Checked for compliance

- Uploaded to advertising platform

MGID GenAI workflow

- Image is generated inside the advertising platform

- Automatically aligned with native format requirements

- Reviewed according to platform policies

- Ready for campaign launch

| | Midjourney | MGID GenAI |
|---|---|---|
| Produces high-quality visuals | ✅ | ✅ |
| Built for advertising placements | ❌ | ✅ |
| Alignment with platform guidelines | ❌ | ✅ |
| Designed for campaign use | ❌ | ✅ |

Key Differences: MGID GenAI vs. Midjourney

The difference between MGID GenAI and Midjourney lies in their operational design. Both generate high-quality images, but they exist at different points in the advertising lifecycle.

The table below summarizes where those differences become most visible in practice.

| Dimension | Midjourney | MGID GenAI |
|---|---|---|
| Core purpose | Visual creation and inspiration | Advertising-oriented image generation |
| Generation trigger | Manual prompt | Creative brief + ad constraints |
| Position in workflow | Before launch | Creative production stage |
| Creative replacement | Manual | Rapid variation generation |
| Output readiness | Raw image | Ad-ready, moderated creative |
| Scaling effort | Increases with volume | Reduced adaptation effort |

Why Purpose-Built GenAI Outperforms Generic Tools in Advertising

Advertising operates under constraints that most creative tools do not consider: placement rules, compliance standards, layout limitations and conversion-driven design. Generic generative AI models optimize for aesthetics. Purpose-built advertising AI optimizes for contextual usability within those constraints.

In practice, a successful advertising image must do more than look appealing. It must:

- fit native placements;

- remain clear in small formats;

- support strong headlines;

- align with compliance requirements;

- maintain visual focus on the value proposition.

Meeting these requirements consistently is a structural task, and the structural distinction between generic and purpose-built GenAI becomes clearer when comparing their capabilities.

| | Generic GenAI | Purpose-built GenAI |
|---|---|---|
| Learns from prompts | ✅ | ✅ |
| Understands artistic styles | ✅ | ✅ |
| Understands ad formats | ❌ | ✅ |
| Generates placement-ready assets | ❌ | ✅ |

When Midjourney is the Right Choice

Midjourney works best for:

- early-stage concept development;

- visual experimentation before launch;

- small teams testing ideas manually;

- campaigns where assets are adapted manually before launch;

- creative work that doesn’t require constant rotation.

In these cases, manual oversight is manageable, and the cost of delayed reaction is relatively low.

When MGID GenAI Becomes the Better Fit

MGID GenAI becomes the better fit when advertising readiness and scalability matter. It is particularly relevant for teams that:

- launch native advertising campaigns regularly;

- require creatives aligned with platform formats;

- want to reduce manual adaptation work;

- prioritize production efficiency in paid environments;

- need compliance-aware visuals from the start.

Conclusion

Midjourney and MGID GenAI operate at different stages of the same creative process: Midjourney accelerates ideation, while MGID GenAI accelerates deployment.

In advertising, the real advantage comes from visuals that move seamlessly from creation to launch without additional adaptation, delays or operational friction.

FAQ

Is Midjourney suitable for advertising creatives?

Midjourney can help generate visual concepts, but it requires additional adaptation, formatting and compliance checks before those visuals can be used in paid advertising environments.

What makes MGID GenAI different from general GenAI tools?

MGID GenAI is designed specifically for advertising environments, generating visuals that align with platform formats, moderation standards and native placement logic.

Do MGID GenAI creatives require manual moderation?

No, MGID GenAI creatives are generated according to MGID platform guidelines, reducing the risk of moderation issues and shortening preparation time before launch.